How Brands Build Human Trust in the Age of Agentic AI, Starting in 2026

Why Trust is the Real Constraint on Agentic AI Adoption

Agentic AI is no longer a backend efficiency story that stays hidden behind internal dashboards and operational workflows, where the impact is measured in time saved and ticket volumes reduced, and where most of the risk feels manageable because the audience is your own staff. That chapter is ending. Starting in 2026, more agentic AI is stepping into public-facing roles, and that single shift changes the rules more than most organisations want to admit out loud.

Public-facing agentic AI is not just answering questions, pulling up order status, or routing a complaint to the right support team. It is increasingly interpreting context, deciding what matters most, choosing a response, taking an action, and doing it all in real time. It can be calm, fast, and persuasive, but it can also be wrong, inconsistent, tone-deaf, or overconfident in ways that damage a brand and feel as personal to the client as a lapse by a human representative.

This is where the conversation changes because the biggest barrier is no longer about computing power, model quality, or tooling maturity. The biggest barrier is human trust, and trust is far more fragile than most organisations are prepared for, especially in high-stakes environments like finance, healthcare, government-linked services, telco, education, and enterprise B2B.

There is a reason this feels different from the last wave of automation. Traditional automation followed rules. It was predictable in the way a vending machine is predictable because the logic was explicit, the flows were rigid, and the boundary of what it could do was clear-cut. Today, agentic systems work differently. They interpret, they adapt, and they behave like an interface between your brand and the public, which means every response becomes a micro-moment of trust. It is like blowing bubbles and trying to keep them afloat without letting them burst.

Image sourced: https://www.pinterest.com/pin/17944098511211167/

Companies are discovering that the moment AI moves from internal experimentation to external exposure, they inherit a new responsibility. Every response, every automated decision, every escalation path, and every “I’m sorry, here’s what we can do” becomes a reflection of the organisation’s values, governance, and leadership.

This is not an AI problem. It is a human-led communications, leadership, and credibility problem.

And that is the uncomfortable truth that most tool-led transformation roadmaps do not highlight… because it is harder to sell governance and accountability than it is to sell “faster”, “cheaper”, and “automated”.

If you want a grounded way to explain this to leadership, consider showing a simple evidence-backed chart from a 2025 global trust study on AI, because it helps to reframe the story. The point to land is that the public’s relationship with AI is cautious, and that trust is not guaranteed simply because the technology works.

Why Search Visibility alone no longer Builds Trust

For years, digital credibility was anchored in search. If you ranked well on Google, appeared authoritative in media, or were referenced in industry reports, you were trusted by default, or at least you were given the benefit of the doubt long enough for a customer to click, browse, and form an opinion on your own turf.

That era is ending, and not because search is dead, but because trust formation has moved upstream and sideways. It now forms outside traditional search channels, and increasingly before people ever look you up. By the time someone searches your brand, the narrative is often already in motion.

Communities form opinions in Reddit threads, private messaging groups, Slack communities, LinkedIn comment sections, and niche industry forums, and those opinions move faster than formal communications cycles. AI-generated summaries and answer engines amplify sentiment rather than correct it. They do not politely pause to ask whether a public claim is fair, whether context is missing, or whether an incident was resolved. They synthesise what is available and what is repeated.

Agentic AI accelerates this dynamic because when users interact with an autonomous agent representing your brand, they are not judging the model. They are judging your brand, your leadership, your intent, and your competence. And they are often doing it in a moment that is emotional rather than analytical, such as a peak search season.

If the AI agent dismisses users midway through their search journey, they feel ignored by the company. If the agent miscommunicates, they feel misled by the company, and “misled” is not a word you want attached to a buyer’s purchasing decision. If the agent sounds robotic or evasive, they feel put off or stonewalled by the company. If the agent escalates too slowly or too aggressively, they may feel unsafe or come to distrust the company. The customer does not separate “the AI layer” from “the real brand” because in a public-facing context, the AI layer is part of the brand (like an extension of a limb).

This is why many organisations feel uneasy right now. They sense the shift, but they are still optimising for the old rules, which is why so much effort goes into visibility, traffic, and content production, while the trust infrastructure behind public-facing systems remains vague, underfunded, or delegated to someone who may or may not be able to carry it through.

If your content strategy team still assumes that ranking equals credibility, you are already late, not because you did something wrong, but because the environment has changed. Trust has always been social-first and search-second, which means the job is no longer about “getting discovered”. The job is about “being trusted when discovered”.

The Quiet Anxiety Inside Enterprises

Across Singapore, APAC, and global enterprises, a quiet pattern is emerging, and it tends to show up first in the places where confidence is hardest to perform. You see it in leadership Q&A sessions, in procurement checkpoints, in risk reviews, and in the tone of internal meetings where teams discuss “pilot outcomes” but still hesitate to go live.

Some companies have invested heavily in AI tooling, automation platforms, and agent frameworks, yet decision makers remain hesitant to push these systems into customer-facing roles. The hesitation is usually described as technical, whereas it is often emotional and reputational, shaped by how leaders feel about risk oversight.

A single positive AI interaction rarely becomes a headline because competent service is simply expected. A single negative AI interaction can become a GIF, a meme, a screenshot, a Reddit thread, a headline, or a cautionary tale. Even when the facts are incomplete, the sentiment can paint a public picture that is complete enough to harm credibility.

Leaders are asking questions they did not have to ask before, and these questions are not hypothetical.

What happens when an AI agent makes the wrong call publicly, and the customer acts on it?

Who is accountable when an autonomous system miscommunicates, even if the source content was correct?

How do we correct misinformation fast enough when the system itself moves faster than humans?

What happens when the agent’s tone clashes with the brand’s values, and the public interprets it as culture?

What happens when a competitor’s customers bring your agent’s responses into their own community spaces, and you lose narrative control without even knowing it happened?

These are operational fears rooted in real incidents, real backlash, and real loss of trust.

And if you are reading this as a marketing or communications leader, you already know the hardest part. It is not cleaning up the mess after the incident. It is proving, after the fact, that the organisation deserves to be trusted again. Trust is also not logical in the way an algorithm or a dashboard is logical. Trust is emotional, contextual, and cumulative, and it is shaped as much by how you respond as by what happened in the first place.

Public-Facing Agentic AI is a Trust-Stress Test

Agentic AI introduces a structural change. Traditional automation followed rules, which meant your risk profile was mostly about whether the rules were correct, whether the integration worked, and whether edge cases were handled with a fallback. Agentic systems interpret context, act independently, and adapt over time, which means your risk profile shifts toward behaviour, judgement, and alignment.

This autonomy is powerful, but it also removes the comforting illusion of full human control. In internal settings, teams can tolerate that uncertainty because there is organisational context, shared language, and a built-in assumption that mistakes will be fixed quietly. In public settings, uncertainty feels like negligence because customers do not want to be your “guinea pigs” in a test environment meant for your learning curve.

When an AI agent responds to a customer complaint, moderates a discussion, or makes a recommendation, the public does not see “an experiment”. They see a decision in real time, an action executed in your brand voice, and the organisation’s values in motion.

Trust does not break because the system is imperfect, but because the organisation behind it appears unprepared, evasive, or disconnected from human accountability and responsibility. People can forgive an honest mistake. What they struggle to forgive is a company that seems to hide behind technology, as though “the AI did it” is an acceptable explanation for leaving customers in harm’s way.

This is why public-facing agentic AI is not just another channel. It is a stress test of your organisation’s AI maturity, because it reveals whether you can operate with transparency, responsibility, and speed, without sacrificing the human elements that earned you the label “trusted by many clients and partners” in the first place.

My Personal Anecdote: Trust was always Built by Consistency, Not Built by Tools

I once worked through a real-world crisis communications incident long before ChatGPT, agentic systems, or real-time AI summarisation existed, and I still remember what it felt like to watch a narrative take on a life of its own while we tried to keep up with nothing but human eyes, human judgement, and raw endurance.

There were no comprehensive dashboards to tell us where sentiment was heading, no automated monitoring to catch a spark before it became a fire, and no neat “single pane of glass” that made the chaos legible, so we did what teams used to do when the Internet moved faster than corporate workflows, which was to split platforms manually and track everything in parallel, from news sites to forums, message boards, social media feeds, and anonymous comment sections that felt like they were multiplying by the hour.

The volume was relentless, but the emotional weight was worse because misinformation travelled faster than clarification ever could, and public emotion mutated by the hour. It showed not just in the content of what people said, but in the tone, the assumptions, and the certainty with which strangers concluded what was true, what was false, and who deserved to be blamed.

What stayed with me was not the workload. It was the uneasy realisation that credibility can be lost in minutes and rebuilt only in months or years, and that people do not always wait for the full story to form a judgement because when humans feel uncertain, they reach for meaning, and meaning often comes in the form of narrative rather than evidence.

A few years later, I saw something similar from a completely different angle, far from any crisis war room. It was something that felt far more personal and far more joyful, which was building and growing my cat’s Instagram account as a former micro-influencer.

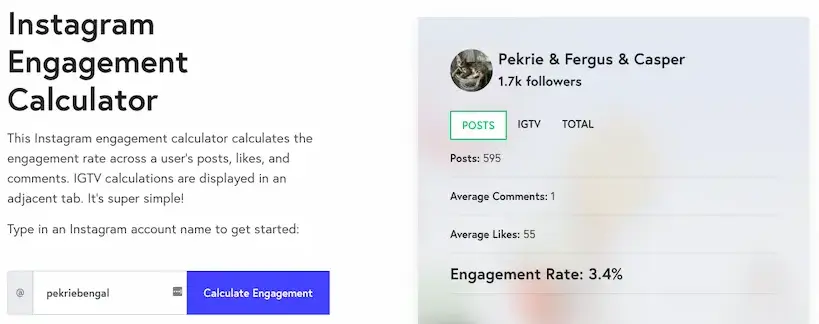

Back then, I taught myself the ropes and grew an organic following of around 2,000 followers on my cat’s Instagram page. It did not come from gimmicks, from buying attention, or from having the perfect hashtag strategy. Hashtags were always secondary to the real growth engine: consistent posts with authentic storytelling that made followers stay for almost every update and feel like they were part of something warm and real. In fact, they were part of my cats’ growing-up journey. Here’s the full view of my cats where I started building and growing their Instagram account in 2016.

It started with something simple and honestly pure. I was inspired by the everyday beauty of owning, caring for, and playing with my cats, and I found myself capturing their playful, innocent, and sometimes hilariously “cat-political” moments: the kind of moment that only makes sense when you truly live with a cat and start noticing how much personality they pack into silence, side-eyes, and tiny rituals. And somehow, being able to share those moments with the world became one of the most unexpectedly beautiful things I have experienced. Of course, the interactions were even more beautiful when my followers lived with cats as well, and we bonded over several posts.

The growth came from daily posting, but more than that, it came from the feeling of continuity because people trust what they can follow, and they follow what feels consistent, honest, and human, even when the subject is a Bengal cat or a Maine Coon and Bengal mix with a strong personality and an opinion about everything.

And then life happened the way it always does. Work commitments clamped down, energy got stretched thin, and I no longer had the same capacity to post regularly, which meant I could feel the difference immediately. Not because the algorithm suddenly changed, but because when consistency disappears, the relationship weakens even when the audience still likes you, and even when your content is still good.

I still miss running the account the way I used to, and I do try to update it once in a while, but that experience gave me a lesson I trust more than most industry advice because I lived it in real time.

It taught me that trust is not built by optimisation. It is built by the rhythm of life. Show up not only when it is convenient, but whenever it matters, rain or shine.

It is built by showing up consistently enough that people begin to recognise your voice, your intent, and your pattern of care, and it is strengthened every time you tell the truth in a way that feels grounded, relatable, and believable.

This is exactly why trust becomes the defining constraint when agentic AI moves into public-facing roles because the brands that win will not be the ones shouting the loudest about “responsible or ethical AI” or stacking the most impressive tools, but the ones that commit to constant authentic storytelling across their channels in a way that makes customers and communities feel they know who you are, what you stand for, and what you will do when something goes wrong.

Because when AI begins speaking on your behalf, people will not judge you by the sophistication of your stack.

They will judge you by whether they trust your story. And in 2026, storytelling is not a marketing aesthetic. It is the pathway to credibility.

Why Tool Sophistication does Not Equal Trust

One of the most dangerous assumptions companies make is believing that better tools automatically lead to better outcomes. The assumption feels reasonable in procurement discussions, change management, and transformation narratives: you buy the platform, you integrate the system, you train the staff, and the value appears. In reality, for some, unused software licences sit quietly every month, draining operating budgets like invisible electricity leaks. AI subscriptions renew, automation platforms remain idle, and dashboards go unchecked.

The waste is not just financial. It is cultural.

When teams do not understand how AI fits into their workflows, or why it exists beyond experimentation, trust erodes internally first, and internal trust erosion always becomes external fragility later. Employees stop believing in transformation narratives long before customers do. This is because they see the gap between the story and the daily reality. They see the “AI initiative” celebrated while the underlying data hygiene remains messy. They see dashboards that do not match what Sales teams hear. They see tools getting blamed when the real issue is ownership and the due diligence of a gatekeeper.

I have sat with teams surrounded by advanced AI tools, watching them struggle to translate any of it into real operational value. Even after a vendor walked through a single, narrow use case, automating follow-ups to prospects on customer-facing platforms, conversion rates hardly shifted in the weeks that followed. The tools were never the problem. Interpretation was, and so was the absence of clear decision ownership.

This is why public-facing agentic AI cannot be approached as “another capability”. It must be approached as a system of trust, which means you do not start with features. You start with intent, accountability, and guardrails.

How Human Trust is Built in the Agentic Era

Trust in the age of agentic AI is built through signals, and not promises because promises are cheap in an era where every vendor can generate convincing sales pitch decks, and where every organisation can publish a glossy statement about “responsible and ethical AI” without showing any clear evidence of what responsibility looks like operationally.

The signals that build trust are visible, repeatable, and hard to fake over time.

– LadyinTechverse

Clear accountability signals show that there is a human behind the system who is responsible for outcomes, not just for uptime.

Visible leadership involvement shows that the system is not a side project, and that the company understands the reputational stakes.

Transparent escalation paths show that customers are not trapped inside automation routes when the situation requires empathy, nuance, or discretion.

Consistent tone and behaviour across channels show coherence, which is one of the strongest trust cues humans have because inconsistency can read as manipulation or confusion.

People do not expect perfection from AI. They expect honesty, coherence, and responsibility from organisations, especially when things go wrong. If your agent makes an error, the public is watching whether you correct it, whether you acknowledge it, and whether you learn from it without becoming defensive.

This is why companies that rush into public-facing AI without rethinking communications, governance, and leadership involvement often struggle. Agentic AI magnifies whatever already exists. Strong cultures scale. Weak ones fracture.

And here is the part most teams do not want to hear, but need to hear anyway. Trust is not built by saying “this is safe”. Trust is built by showing what happens when it is not safe, and proving that you have designed for that reality.

Case Study: Deloitte Australia’s Hallucination Moment, and Why Compliance is Taken for Granted

A few months ago, a real-world example of how quickly trust in a consulting firm can fracture showed up in Australia, and it is the kind of incident every enterprise should treat as a warning, not as gossip. Deloitte Australia delivered a report for an Australian government department that later drew scrutiny for multiple inaccuracies that looked like classic generative AI hallucinations, including fabricated citations and references. The story escalated because the document was not a casual blog post. It was a formal report tied to public-sector accountability, and that context changes everything. When the errors surfaced, Deloitte agreed to partially refund the government, and a revised version of the report was issued with misleading references removed.

What matters here is not the internet drama. What matters is the operational lesson: in the agentic era, trust breaks at the citation layer first, because citations are how institutions signal serious accountability. Once references are unreliable, the reader stops evaluating the argument and starts questioning the integrity of the process. And that is exactly where compliance becomes a frontline function. A strong compliance and governance layer is what forces the unglamorous questions that protect credibility: Where did this claim come from? Can we verify it? Who approved it? What is the escalation path if it is wrong? In Deloitte’s case, reporting indicated the work used a generative AI tool, and the post-publication clean-up became part of the story because the human-in-the-loop controls were not visible upfront.

So when I say trust is built by showing what happens when it is not safe, this is the proof. The public does not need you to be perfect. They need you to be auditable, accountable, and human-led when it counts, especially when AI is involved. In 2026, any organisation deploying public-facing agentic AI without a compliance-grade verification workflow is not moving fast. It is exposed, with nothing underneath it when something goes wrong. How is it going to explain that?

The Trust-Led Operating Model that most Brands are Missing

If you want to make this practical, you need an operating model that treats public-facing agentic AI like a reputational product, and not like a software feature. A reputational product has inputs, outputs, quality controls, and incident handling, but it also has tone, expectation-setting, and moral clarity because public trust is shaped by what people believe you stand for.

A trust-led operating model usually includes five layers that work together, whether you call them this or not.

1) The intent layer

This is where you define, in plain language, what the agent is allowed to do, what it is not allowed to do, and what values it must prioritise when trade-offs appear. Intent must be written so clearly that it can survive internal hand-offs because if intent lives only in someone’s head, it will not survive scale or stress.

2) The accountability layer

This is where you assign a named owner, not a committee, and you make that ownership visible internally. The owner is responsible for outcomes, escalation, and risk posture. This does not mean one person does all the work. It means one person is answerable when ambiguity appears.

3) The governance layer

This is where you define review cycles, approval paths, policy alignment, and the boundaries of what the agent can access and act on. Governance is not there to slow down innovation. It is there to prevent the kind of public harm that destroys long-term adoption.

4) The communications layer

This is where you define the voice, the tone, the disclosure strategy, and the “human reassurance” patterns that customers need in moments of frustration, uncertainty, and distrust. Communications cannot be bolted on after the model is deployed because the first time a customer meets your agent is a first-impression moment, much like a first meeting with a human representative that leaves a lasting impression. It is not a technical demo.

5) The measurement layer

This is where you decide what you will measure that actually reflects trust, not just activity. Trust signals include complaint resolution satisfaction, escalation success rate, repeat contact reduction without deflection, sentiment shifts in community spaces, and the speed and clarity of corrections when incidents occur.

The brands that treat these layers as optional usually end up paying the cost much later, typically in a “pressure cooker” situation or a crisis, when they would rather not be improvising.
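To make the measurement layer a little more concrete, here is a minimal Python sketch of how a few of the trust signals named above could be computed from conversation records. Everything in it is an assumption for illustration: the `Interaction` fields, the `trust_signals` function, and the sample data are hypothetical, and a real version would draw on whatever your support and survey systems actually record.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    """One customer conversation with the agent (all fields are hypothetical)."""
    escalated: bool               # the agent handed over to a human
    resolved: bool                # the customer's issue was actually resolved
    repeat_contact: bool          # the customer came back about the same issue
    satisfaction: Optional[int]   # post-resolution survey score (1-5), if answered


def trust_signals(interactions: list[Interaction]) -> dict[str, float]:
    """Summarise trust-oriented signals rather than raw activity counts."""
    total = len(interactions)
    if total == 0:
        return {}
    escalated = [i for i in interactions if i.escalated]
    rated = [i.satisfaction for i in interactions if i.satisfaction is not None]
    return {
        # Of the conversations handed to a human, how many ended resolved?
        "escalation_success_rate": (
            sum(i.resolved for i in escalated) / len(escalated) if escalated else 0.0
        ),
        # How often did customers have to come back about the same issue?
        "repeat_contact_rate": sum(i.repeat_contact for i in interactions) / total,
        # Average survey score among customers who answered.
        "avg_resolution_satisfaction": sum(rated) / len(rated) if rated else 0.0,
    }


# Illustrative sample data, not real results.
sample = [
    Interaction(escalated=True, resolved=True, repeat_contact=False, satisfaction=5),
    Interaction(escalated=True, resolved=False, repeat_contact=True, satisfaction=2),
    Interaction(escalated=False, resolved=True, repeat_contact=False, satisfaction=None),
    Interaction(escalated=False, resolved=True, repeat_contact=True, satisfaction=4),
]
signals = trust_signals(sample)
```

The design choice worth noting is what is left out: there is no count of conversations handled or tickets deflected, because those measure activity. Sentiment shifts in community spaces and correction speed would need their own data sources and are not covered by this sketch.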

Why CEOs and C-Suite should Not Stay Invisible

In moments of uncertainty, silence is interpreted as avoidance. That is not because the public is unreasonable, but because people have learnt through repeated experiences that companies often hide behind processes and jargon when they are not prepared to be accountable.

Public-facing AI systems require executive ownership, and not delegated oversight. When something goes wrong, audiences want to know who stands behind the system, not which vendor supplied it, and they want to know whether leadership understands the impact beyond PR risk.

Leadership visibility is not about issuing statements. It is about signalling responsibility in advance, so that when something happens, your response is not the first time the public sees that you “actually” care.

In practice, this looks like a CEO or C-suite leader doing three things consistently.

First, they sponsor the trust model, which means they fund governance, data quality, escalation capacity, and communications readiness, rather than treating those as “overhead”.

Second, they endorse transparency, which means they accept that responsible AI includes disclosing limitations, explaining safeguards, and acknowledging incidents when they happen.

Third, they empower fast correction, which means they reduce internal bureaucracy so teams can respond within hours.

The brands that retain trust in 2026 and beyond will be the ones where leadership understands that AI is no longer just an operational layer. It is a reputational one.

From Tool-Led AI to Trust-Led Systems

The future does not belong to companies with the most AI agents. It belongs to companies with the clearest intent.

Tool-led adoption often looks like this: a new feature appears, competitors talk about it, vendors promise advantage, and teams rush to implement it, hoping the capabilities will create clarity after the fact. Trust-led adoption looks different. It begins with decisions. It asks what the system must do, what risk it introduces, who is accountable, and how the brand will behave when uncertainty appears.

Agentic systems must be designed with human-in-the-loop checkpoints, ethical review, communication alignment, and escalation protocols that reflect real organisational values, rather than vague principles that nobody can enforce.

This is where many B2B organisations are currently stuck. They know what AI can do, but they are unsure how to let it speak on their behalf, and that hesitation is not weakness. In many cases, it is wisdom because it recognises that automation is easy but trust is expensive.

If you want an honest rule that helps teams decide when to proceed, use this: if you cannot describe, on a single page, how the agent behaves under stress, you are not ready to deploy it publicly.

Why Communities Decide Before Search Engines Do

Trust is now shaped socially before it is verified technically, which means the narrative about your brand can be formed in places you do not control, in languages you would not choose, and in interpretations you might disagree with. By the time a customer searches for your brand, the narrative is often already formed, and AI summaries will reinforce what communities are already saying.

This is why brands must think beyond optimisation and visibility. They must think in terms of relationship architecture, which means building trust where people actually gather, not just where marketing teams are comfortable measuring impressions.

Relationship architecture includes community listening, participatory engagement, credible human faces, and responsive communication patterns that do not rely on perfect scripts. It includes showing up when you are not selling anything because that is how trust is built. It includes being consistent in how you treat people because communities notice patterns, and communities remember.

Agentic AI must support this architecture, and not undermine it. If your agent becomes a deflection machine, communities will call it out. If your agent becomes a tone-deaf gatekeeper, communities will screenshot it. If your agent becomes a “polite wall” that keeps people from getting help, communities will turn your brand into a cautionary tale, and AI systems will later summarise that sentiment as though it were consensus.

This is why trust strategy must be social, operational, and human, not merely technical.

The Real Risk of Moving Too Fast

The danger is not that AI will replace humans too quickly. The danger is that organisations will deploy systems without understanding the social weight those systems carry.

When you put an agent in front of the public, you are not only deploying software. You are deploying a relationship agent with a voice. You are also deploying a set of behavioural promises, even if you never write them down.

Trust is not programmable. It is earned through consistency, humility, and accountability.

Consistency means your system behaves in line with your values, not only when things are easy but when a customer is frustrated, when a situation is complex, and when the safest response is to escalate. Humility means you acknowledge limitations without hiding behind buzzwords, and you prioritise the customer’s reality over your internal convenience. Accountability means you do not blame the model, the vendor, or “unexpected outputs” because the public does not care about your AI stack. They care about the impact your brand has on their reality.

Moving too fast often looks like pushing public-facing automation before you have done four unglamorous things: defining escalation paths, preparing the comms playbook, training the human team on how to manage and intervene, and stress testing the system in realistic and relevant scenarios.

If you skip these steps, you are launching into a new environment that is already sceptical, and your margin for error becomes dangerously thin.

A Practical Trust Framework for Public-Facing Agentic AI

If you want something you can use, something you can agree with, here is a trust framework you can implement without turning it into a months-long “strategy theatre” exercise. Think of it as a baseline system that makes your agent safer, your brand clearer, and your response faster when something goes wrong.

1) Design the “truth limitations” before you design the features

Define what the agent can claim, what it must verify, and what it must never assume. This includes sensitive topics, regulated advice, pricing promises, refund commitments, and anything that could create legal or financial harm. Your goal is not to make the agent silent, but to make it honest.

2) Build a visible escalation bridge, not a hidden escape hatch

Customers should not have to “fail” the agent to reach a human. The escalation path should be normalised and easy to understand. When the agent escalates, it should carry key context forward, so the customer does not repeat themselves because repetition is where frustration turns into hostility and allows “customer churn” to creep into your sales pipeline.

3) Create a “tone policy” that is human and not corporate-y

A tone policy is not a list of approved adjectives. It is a set of behavioural rules. For example, the agent should acknowledge emotion, and it should avoid defensive language, making the customer feel wrong, and overpromising. The agent should be eager to help, calm, and specific, rather than overly cheerful and vague.

4) Put monitoring where reputation forms, not only where traffic forms

Sentiment monitoring cannot live only in analytics dashboards. It must include the community spaces where your narrative forms, including forums, social platforms, review sites, and discussion threads. You do not need to control these spaces. You only need to listen to them consistently enough to detect shifts before they become crises. Of course, you will never have full visibility into dark social channels, where conversations are carried through personal WhatsApp, Telegram, Snapchat, Slack, Discord, and similar messaging applications.

5) Run quarterly incident drills the way you run fire drills

Treat incidents as operational realities.

- Run simulations.

- Practice responses.

- Practice escalation.

- Practice apology language.

- Practice corrective messaging.

- Practice internal alignment so you do not waste precious hours debating what to say while the public narrative grows.

This is how trust becomes a structured system.
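The first two points of the framework, truth limitations and the escalation bridge, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a reference implementation: the topic names, the `CLAIM_POLICY` mapping, the `Handoff` record, and the `maybe_escalate` function are all hypothetical, and a real policy would be written with legal, compliance, and the named owner of the agent.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class ClaimRule(Enum):
    ALLOW = "allow"        # the agent may state this directly
    VERIFY = "verify"      # the agent must check a system of record first
    ESCALATE = "escalate"  # the agent must hand over to a human


# Hypothetical topic-to-rule mapping, written down before any features are built.
CLAIM_POLICY = {
    "opening_hours": ClaimRule.ALLOW,
    "order_status": ClaimRule.VERIFY,
    "pricing_promise": ClaimRule.ESCALATE,
    "refund_commitment": ClaimRule.ESCALATE,
    "regulated_advice": ClaimRule.ESCALATE,
}


@dataclass
class Handoff:
    """Context carried to the human so the customer does not repeat themselves."""
    customer_id: str
    topic: str
    summary: str
    transcript: list[str] = field(default_factory=list)


def decide(topic: str) -> ClaimRule:
    # Unknown topics default to escalation: the agent must never assume.
    return CLAIM_POLICY.get(topic, ClaimRule.ESCALATE)


def maybe_escalate(customer_id: str, topic: str,
                   transcript: list[str]) -> Optional[Handoff]:
    """Return a Handoff when policy requires a human, otherwise None."""
    if decide(topic) is not ClaimRule.ESCALATE:
        return None
    # Use the customer's latest message as a stand-in summary (an assumption).
    summary = transcript[-1] if transcript else ""
    return Handoff(customer_id, topic, summary, list(transcript))


# Illustrative usage with made-up data.
handoff = maybe_escalate(
    "c-1", "refund_commitment", ["I was charged twice", "I want a refund"]
)
no_handoff = maybe_escalate("c-1", "opening_hours", [])
```

Two choices in the sketch mirror the framework. Unlisted topics fall through to escalation, so silence in the policy is treated as “ask a human” and not as permission. The handoff also carries the full transcript forward, so the customer is not asked to start again.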

Closing Reflection: The Moral of the Story

The moral of the story is not that AI is dangerous, or that only academics can find errors hidden in long reports, even though there is truth in the idea that careful readers spot what busy organisations miss. The deeper moral is that speed has become cheap, and credibility has become expensive.

In practice, organisations must pair powerful agentic tools with rigorous human verification. Researchers, reviewers, and internal subject-matter experts must interrogate outputs, check assumptions, and validate conclusions before anything reaches the public domain. You do not do this because you are wary of uncharted innovation. You do this because you respect the public and the institutions you answer to.

AI can accelerate thinking, but it cannot replace responsibility, and the more public-facing your systems become, the more your brand will be judged on how you behave under pressure, especially when credibility, public confidence, and long-term trust are at stake.

That is the future LadyinTechverse stands for. And no, not as a buzzword sanctuary but as a practical one, where clarity is a discipline, and trust is treated as the real operating constraint, not an afterthought.

Download Agentic AI Readiness Checklist 2026 by LadyinTechverse (.XLSX)

I am feeling generous, so there is no form for you to fill in this time around. Happy analysing! 😅

File size: 40 KB, from a shared OneDrive folder

Internal Articles

- Agentic AI in 2025: Ripples that Signal the 2026 Workflow Tsunami

- Digital Trust in 2025: Governance and Security Shaping the Next Economy

- Data Quality is the Power Move behind every winning AI Strategy in 2025

- How can CEOs use AI and Leadership to improve Crisis Communications in 2026?

- Why more than 90% of AI Pilots Fail and How Hyper-Personalisation Wins

- AI Overviews are Reducing Your Clicks: How Brands stay Visible when Search stops sending Traffic

Sources Referenced

- 2025 global trust evidence https://assets.kpmg.com/content/dam/kpmgsites/ae/pdf/trust-attitudes-and-use-of-ai-executive-summary.pdf.coredownload.inline.pdf

- Deloitte Australia case study for “trust breaks at the citation layer first” https://apnews.com/article/ab54858680ffc4ae6555b31c8fb987f3

- Compliance Week’s Risk Management https://www.complianceweek.com/risk-management/ai-hallucinations-in-deloitte-australia-report-highlight-important-role-for-compliance/36302.article

- Edelman Trust Barometer 2025 https://www.edelman.com/sites/g/files/aatuss191/files/2025-01/Global Top 10 2025 Trust Barometer.pdf

Visual Content Disclaimer: All images in this post are AI-generated.

#LadyinTechverse #DigitalSanctuary #AgenticAI #HumanTrust #AIandBusiness #AIStrategy #CorporateCommunications #DigitalTransformation #TrustEconomy