AI Intensifies Work and Multiplies Risk According to HBR’s 2026 Governance Research

AI Did Not Remove Your Work

It moved the work somewhere you were not prepared to manage.

Harvard Business Review’s February 2026 research landed on something most teams have been feeling for months but struggled to articulate. AI changes what work becomes and where effort gets redistributed across your operations. You produce more output faster, which sounds brilliant in theory until you realise the oversight workload expanded right alongside it. On top of that comes more coordination across business units, unexpected validation against standards that nobody documented properly, and even more documentation to prove that everything is safe, accurate, and compliant when someone starts asking hard questions three months after deployment.

If you have watched a team adopt automation with genuine excitement, only to spend late nights weeks later cleaning up data mismatches and rewriting approval processes nobody bothered to document the first time around, you already understand this pattern intimately. AI fundamentally shifts where human effort concentrates in your workflows. The output phase accelerates dramatically and measurably. The verification, governance, and accountability phase grows heavier in ways most organisations never budgeted for or even considered during pilot phases. Without solid governance infrastructure built from day one, AI-intensified work becomes AI-intensified risk that nobody wants to own when systems break or regulators start investigating what went wrong and who failed to prevent it. Are we heading towards an “AI burnout” stage?

Why Agentic AI Changes Everything About Oversight

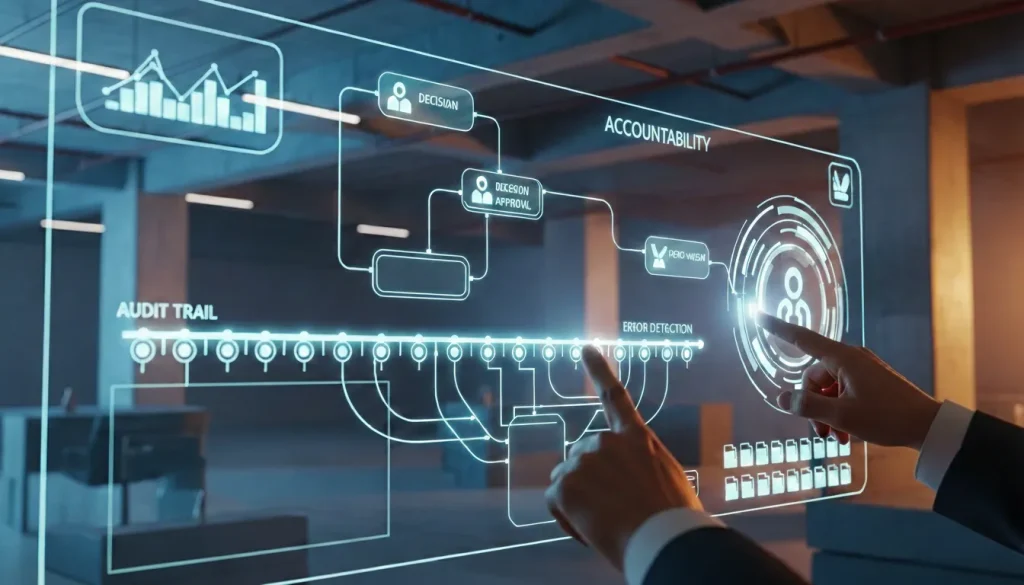

Generative AI suggests content and provides drafts that humans review carefully before anything goes live to customers or stakeholders. Agentic AI executes tasks autonomously across multiple systems without waiting for explicit human approval each time an action needs to happen. That shift from “assist” to “act” is where risk profiles change completely and governance requirements expand sharply. When systems start making decisions and taking actions that affect customer data, financial records, or compliance obligations, you need traceability infrastructure that can answer hard questions under pressure from auditors, regulators, or anxious leadership teams. What specific data did the system access during processing? What action did it take, and when? Who approved the business rules and decision logic the system operates under? What happens when outputs create downstream problems for customers or other departments?
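The questions above map fairly directly onto fields in a log record. The sketch below is a minimal, hypothetical illustration in Python, assuming a single-process service; the names `AuditRecord`, `AuditLog`, and every field are illustrative, not a format prescribed by HBR or any standard. Each record also stores the hash of the record before it, so later edits to earlier entries become detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """One autonomous action, captured so the traceability questions can be answered later."""
    agent_id: str                    # which system acted
    action: str                      # what it did
    data_accessed: tuple[str, ...]   # which data sources it touched
    rule_version: str                # which version of the business rules was in force
    rules_approved_by: str           # who signed off on that rule version
    timestamp: str                   # when it happened (UTC, ISO 8601)
    prev_hash: str                   # digest of the previous record, for tamper evidence

    def digest(self) -> str:
        # SHA-256 over a canonical JSON encoding of every field.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each record carries the digest of the one before it."""

    def __init__(self) -> None:
        self._records: list[AuditRecord] = []

    def append(self, agent_id: str, action: str, data_accessed: list[str],
               rule_version: str, rules_approved_by: str) -> AuditRecord:
        prev = self._records[-1].digest() if self._records else "genesis"
        record = AuditRecord(
            agent_id=agent_id,
            action=action,
            data_accessed=tuple(data_accessed),
            rule_version=rule_version,
            rules_approved_by=rules_approved_by,
            timestamp=datetime.now(timezone.utc).isoformat(),
            prev_hash=prev,
        )
        self._records.append(record)
        return record

    def touching(self, source: str) -> list[AuditRecord]:
        """Every recorded action that read or wrote the given data source."""
        return [r for r in self._records if source in r.data_accessed]

    def verify(self) -> bool:
        """Check the hash chain. Editing or deleting any record except the newest breaks it."""
        expected = "genesis"
        for record in self._records:
            if record.prev_hash != expected:
                return False
            expected = record.digest()
        return True
```

An auditor’s question such as “what touched the contact data?” then becomes a call like `log.touching("crm.contacts")`. Because the newest record has no successor, storing its digest somewhere outside the log would be needed to protect that last entry as well.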

A marketing team producing fifty campaign variations instead of two still needs someone to validate brand consistency, legal compliance, and factual accuracy across every version before anything reaches customers or scales across channels. A sales team automating CRM updates at scale discovers later that one incorrectly mapped field propagated silently across 500 customer accounts, creating a reconciliation nightmare that takes weeks to fix whilst the team also manages customer confusion and frustration. A finance department deploying AI invoice extraction assumes the challenge is solved and resources can be reallocated elsewhere, until anomalies start appearing in reports and auditors ask pointed questions: why can the system not explain how it arrived at specific numbers, and who validated the processing rules everyone assumed were working correctly?
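One possible guard against the silent 500-account propagation described above is a dry run: compute how many records an automated update would change before writing anything, and stop for human review when that number is large. This is a minimal sketch over plain dictionaries; the function names, the `ApprovalRequired` exception, and the default limit of 25 are all hypothetical choices for illustration.

```python
from typing import Callable


class ApprovalRequired(Exception):
    """Raised when an automated update would change more records than allowed."""


def plan_field_update(records: list[dict], field: str,
                      new_value: Callable[[dict], object]) -> list[tuple]:
    """Dry run: list (record_id, old_value, new_value) for each record that would change."""
    changes = []
    for record in records:
        proposed = new_value(record)
        if record.get(field) != proposed:
            changes.append((record["id"], record.get(field), proposed))
    return changes


def apply_field_update(records: list[dict], field: str,
                       new_value: Callable[[dict], object],
                       max_auto_changes: int = 25) -> list[tuple]:
    """Apply the update only if its blast radius is small; otherwise stop before writing."""
    changes = plan_field_update(records, field, new_value)
    if len(changes) > max_auto_changes:
        # Nothing has been written yet, so a human can review the plan first.
        raise ApprovalRequired(
            f"{len(changes)} records would change; limit is {max_auto_changes}")
    by_id = {record["id"]: record for record in records}
    for record_id, _old, proposed in changes:
        by_id[record_id][field] = proposed
    return changes
```

With this in place, a mis-mapping that copies a country code into a region field across 500 accounts raises `ApprovalRequired` and leaves every record untouched, whereas a three-record correction goes through automatically. The threshold is a policy decision for the data owner, not a technical constant.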

When AI acts autonomously across your systems, responsibility does not evaporate into cloud infrastructure somewhere beyond your control. Responsibility surfaces as growing review queues, escalation protocols nobody designed properly, monitoring dashboards showing patterns nobody understands fully, and governance frameworks that many teams never needed before because manual workflows had built-in human checks at every stage of processing. Understanding how AI agents can drive profit without sacrificing oversight becomes critical when scaling automation across business-critical workflows where mistakes compound fast and recovery becomes expensive.

The Efficiency Trap Nobody Talks About Honestly

Here is what catches almost everyone off guard during AI deployment. AI makes output cheaper and faster to produce, so leadership naturally expects more output from the same team using existing resources and headcount allocations. When content generation speeds up through automation tools, they request more versions, deeper personalisation across segments, and additional channels that were previously too resource-intensive to manage properly. When reporting can be automated through dashboards and scheduled workflows, they want more granular segmentation, different analytical cuts for various stakeholder groups who all want slightly different views, and real-time updates replacing monthly summaries. When workflows can be handled by systems instead of humans manually processing each step, they assume throughput can increase significantly without additional infrastructure investment or support resources to manage the expanded scope.

This is how work intensification happens: through decisions that sound completely reasonable in strategy meetings and board presentations where everyone nods approvingly. Nobody explicitly announces “let’s burn out the team until they start resigning and we face retention problems”. Instead, they frame it positively: “now that we have AI handling routine tasks efficiently, we should deliver more value with current capacity and demonstrate ROI to justify continued investment.” Then the hidden work surfaces like an iceberg nobody mapped during planning. Someone needs to validate AI output against brand standards and accuracy requirements that keep evolving. Someone needs to handle edge cases the system was never trained to recognise or process correctly. Another person needs to confirm workflows did not break silently overnight when a supplier changed their data format without advance notice. Leadership teams need to maintain the business rules that keep systems aligned with evolving requirements and regulatory changes. And someone needs to prove compliance when regulators arrive asking detailed questions about decisions, data handling practices, and who approved which parameters.

The productivity equation becomes messy and uncomfortable because output genuinely increased in measurable ways that look impressive in quarterly reports. The challenge is that oversight workload increased alongside it, sometimes exceeding the efficiency gains that leadership initially celebrated during rollout announcements. According to HBR’s research, organisations consistently underestimate the coordination work required to manage autonomous systems safely at scale across departments and workflows. Teams discover this reality after deployment, when exception queues grow exponentially and stakeholders demand explanations nobody prepared for during the exciting pilot phase where everything seemed promising and manageable. Learning why agentic AI will transform workflows in 2026 helps you see why governance cannot be retrofitted as an afterthought when systems are already running in production, affecting real business outcomes.

The Governance Gap That Creates Expensive Risk

Most organisations have tools, subscriptions, pilots running across different departments, and internal enthusiasm for AI initiatives showing up in budget requests and transformation roadmaps. What they often lack is traceability infrastructure connecting all the moving pieces into coherent accountability that survives scrutiny. If your AI workflow touches customer data, financial records, or compliance-related processes that regulators care about, you will eventually need audit trails answering what data the system accessed, what actions it took autonomously, who approved the parameters and business rules governing behaviour, what changed over time and why those changes happened, and who is accountable when outputs cause problems affecting customers or business operations in measurable ways.

In regulated sectors like finance, healthcare, logistics, and enterprise B2B where contracts carry legal weight and violations trigger penalties, tolerance for unexplained automation is low and getting lower as regulators worldwide catch up to deployment reality. Governance is not bureaucracy designed to slow innovative teams down or kill good ideas with process overhead that frustrates everyone. Governance prevents intensified work from becoming intensified reputational damage, regulatory fines that affect quarterly performance, customer trust violations that take years to rebuild, and expensive cleanup requiring months to resolve properly when proper infrastructure could have prevented the crisis entirely with upfront investment.

My Personal Anecdote

Some years ago, I found myself in the back office of a major supermarket chain in Singapore (which I cannot name) because a stretched-thin staff member let me fetch the missing stock supplies myself rather than wait, as it was close to opening time. What I saw genuinely shocked me. There were papers everywhere: clipped receipts stuck to walls with peeling tape, handwritten forms pasted near workstations, inbox trays overflowing with flimsy multicoloured carbon-copy invoices that looked ready to disintegrate if you breathed on them too hard, and stock supply forms stacked in piles with no organising principle beyond “deal with it eventually.” Out front, this chain had sleek self-checkout kiosks, mobile payment integration, and digital loyalty programmes tracking your purchase history with impressive accuracy. Just three metres behind the scenes, the operational reality was carbon paper, tape, and manual reconciliation processes that probably have not changed fundamentally in two decades, despite all the digital transformation rhetoric in their annual reports. That contrast is not unique to retail. It exists across FMCG, logistics, and any industry with blue-collar physical operations that leadership does not see or prioritise during transformation planning. The parts customers interact with get automated and polished, whilst the supplier and vendor side that customers never see gets left behind until the foundation becomes so brittle that automation cannot safely scale on top of it.

Why Paper Processes Block AI ROI Completely

Let me be honest about something that vendor demos carefully avoid showing you during polished sales presentations. Right now, operations teams across more industries and company sizes than you would expect still process actual paper invoices. Physical thermal receipts fade over time until they become unreadable and useless for verification. Courier-delivered documents arrive creased, torn, soaked, or coffee-stained, and some are barely legible even fresh from the printer. Folders organised manually by month sit on desks and in filing cabinets because digitisation budgets were proposed years ago, postponed repeatedly for more urgent priorities, then quietly forgotten whilst leadership moved on to more exciting technology discussions that sounded better in board meetings and generated positive headlines in investor updates.

Logistics departments still file printed delivery orders in physical storage systems because infrastructure upgrades never received proper investment over the years, and everyone just adapted around limitations that became normal. FMCG back offices reconcile receipts that cannot survive humidity, accidental spills, or the simple passage of time. These operational realities exist across organisations that would never admit them publicly whilst simultaneously announcing AI transformation initiatives to shareholders and customers who expect innovation leadership and competitive advantage.

Before automation becomes reliable at scale, someone needs to do unglamorous foundational work nobody budgeted for properly because it lacks the excitement of AI announcements. Standardise document formats across suppliers and internal departments who all developed their own preferred approaches. Define required fields and validation rules that actually work in messy reality where data quality varies wildly. Decide who owns which data across departments and what “clean data” actually means in practical terms everyone can agree on and maintain consistently. Establish retention policies satisfying both legal requirements and practical retrieval needs when someone needs historical information quickly. Digitise archives going back years with inconsistent formats and quality standards that evolved organically. Reconcile historical records lacking proper documentation or governance frameworks because nobody thought it mattered enough to invest in properly at the time decisions were made.
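“Define required fields and validation rules” can start very small. Below is a minimal sketch for invoices, assuming documents have already been extracted into dictionaries; the field names, date format, and currency list are hypothetical examples that a real team would agree with finance and suppliers. Anything that fails validation goes to a human exception queue instead of flowing into automation.

```python
from datetime import datetime
from decimal import Decimal, InvalidOperation

# Hypothetical rules; a real team would agree these with finance and suppliers.
REQUIRED_FIELDS = ("supplier_id", "invoice_number", "invoice_date", "currency", "total")
ALLOWED_CURRENCIES = {"SGD", "USD", "EUR", "GBP"}


def validate_invoice(raw: dict) -> list[str]:
    """Return a list of problems; an empty list means the invoice may enter automation."""
    problems = [f"missing {name}" for name in REQUIRED_FIELDS
                if raw.get(name) is None or str(raw.get(name)).strip() == ""]
    if problems:
        # Skip format checks until every required field is present.
        return problems

    try:
        datetime.strptime(str(raw["invoice_date"]), "%Y-%m-%d")
    except ValueError:
        problems.append("invoice_date must be YYYY-MM-DD")

    if raw["currency"] not in ALLOWED_CURRENCIES:
        problems.append(f"unsupported currency {raw['currency']!r}")

    try:
        total = Decimal(str(raw["total"]))
    except InvalidOperation:
        problems.append("total is not a number")
    else:
        # is_finite() rejects NaN and Infinity before the comparison.
        if not total.is_finite() or total <= 0:
            problems.append("total must be a positive number")

    return problems


def triage(invoices: list[dict]) -> tuple[list[dict], list[tuple[dict, list[str]]]]:
    """Split invoices into ones ready for automation and ones for a human exception queue."""
    ready, exceptions = [], []
    for invoice in invoices:
        problems = validate_invoice(invoice)
        if problems:
            exceptions.append((invoice, problems))
        else:
            ready.append(invoice)
    return ready, exceptions
```

Returning a list of named problems, rather than a single pass/fail flag, gives whoever works the exception queue something concrete to fix, and the counts per problem type show which suppliers or document types need standardising first.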

This is why AI integration often requires months or years to implement successfully in ways that actually deliver promised value. Teams are not slow or resistant to change, as leadership sometimes assumes during frustrated strategy sessions. Legacy processes were genuinely never designed for automation when the systems were built. Historical records were never treated as strategic assets requiring structure, consistency, and maintenance discipline embedded in operations. Data hygiene was never established as an operational practice with accountability and metrics. It was postponed because other priorities seemed more urgent in quarterly planning cycles focused on visible deliverables. AI is exceptionally good at magnifying the consequences of that historical neglect, scaling small problems into massive systemic issues almost instantly across everything the system touches. Understanding why marketing technology fails without clear strategy helps you avoid repeating expensive deployment mistakes that damage credibility and waste resources.

What Governance Actually Needs to Cover in Practice

A governance strategy matching AI deployment reality should answer these questions clearly and confidently under pressure from stakeholders, regulators, or auditors who show up unexpectedly. What can the system do autonomously without human intervention or approval gates? What actions require explicit human approval before execution affecting customers or operations? What data can it access and what is strictly off-limits regardless of efficiency arguments? How are decisions and actions logged with timestamps and attribution showing who did what? How are errors detected, handled, and escalated to appropriate teams with authority to intervene? Who owns outcomes when systems make mistakes affecting customers or business operations in measurable ways? How do you restore trust when automated decisions damage relationships or violate expectations stakeholders held?
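Several of these questions (what the system may do alone, what needs approval, what data is off-limits, who owns outcomes) can be written down as explicit configuration rather than left as tribal knowledge. The sketch below assumes a hypothetical `AgentPolicy` object that an agent consults before every action; the action names, data source names, and owner are illustrative only. It denies by default, so anything nobody wrote down is not permitted.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"                    # the agent may act on its own
    NEEDS_APPROVAL = "needs_approval"  # a named human must approve first
    DENY = "deny"                      # never allowed, whatever the efficiency argument


@dataclass(frozen=True)
class AgentPolicy:
    autonomous_actions: frozenset[str]
    approval_actions: frozenset[str]
    allowed_data: frozenset[str]
    owner: str  # who is accountable for this agent's outcomes and receives escalations

    def check(self, action: str, data_sources: set[str]) -> Decision:
        # Data outside the allow-list is off-limits regardless of the action.
        if not set(data_sources) <= self.allowed_data:
            return Decision.DENY
        if action in self.autonomous_actions:
            return Decision.ALLOW
        if action in self.approval_actions:
            return Decision.NEEDS_APPROVAL
        # Default-deny: anything nobody wrote down is not permitted.
        return Decision.DENY


# Hypothetical policy for an invoice-processing agent.
invoice_agent = AgentPolicy(
    autonomous_actions=frozenset({"extract_fields", "flag_anomaly"}),
    approval_actions=frozenset({"post_to_ledger", "email_supplier"}),
    allowed_data=frozenset({"erp.invoices", "erp.suppliers"}),
    owner="finance.controller",
)
```

Here `invoice_agent.check("post_to_ledger", {"erp.invoices"})` returns `Decision.NEEDS_APPROVAL`, while any request touching a source such as `"hr.payroll"` returns `Decision.DENY`. Keeping the policy in reviewable code or config also means changes to it can be versioned and attributed, which feeds straight back into the audit trail.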

If you cannot explain workflows clearly under scrutiny from people who do not understand the technical details, you do not have governance you can defend. You have hope combined with good intentions, and neither passes regulatory audits nor satisfies anxious stakeholders when trust breaks publicly through incidents affecting customers or compliance standing. Data hygiene becomes a board-level strategic concern, not just an IT operational responsibility that gets delegated and forgotten until problems surface. Duplicated records, inconsistent field standards, conflicting definitions across departments, and undocumented approval chains become automation failure points that multiply risk as systems scale across the organisation, touching more processes and data.

AI forces organisations to confront process inconsistencies and data quality issues they could comfortably ignore before automation exposed them publicly through customer-facing errors or compliance violations that trigger investigations. Proper deployment takes time primarily because most organisations have accumulated years or decades of technical debt and governance gaps that were never addressed systematically, since manual workflows could absorb the messiness through human judgement and institutional knowledge that never got documented or transferred when people left. The risk surfaces later, when organisations cannot answer fundamental questions that regulators or auditors ask during investigations or routine compliance reviews. Where did customer data go after processing through unapproved systems? Who had access to sensitive information that should have been protected? What decisions were made using which data sources and validation processes? Who is accountable when something breaks or unknowingly violates compliance obligations?

A more useful approach treats shadow AI, the unapproved tools teams adopt to keep up, as a valuable governance signal rather than a security scandal requiring punishment without understanding root causes. If teams depend on unapproved tools to deliver business-critical outcomes consistently, leadership needs to either formalise them properly with appropriate controls and documentation, or replace them with approved alternatives that genuinely meet workflow needs without creating bottlenecks. Punishing people without fixing the underlying pressure and resource constraints just drives tool usage further underground, where it becomes harder to detect and manage whilst risk keeps growing. High dependency means you need controlled transition paths that maintain productivity, not sudden bans that force teams into even riskier workarounds or break critical workflows entirely.

Ethical AI decision frameworks help balance innovation velocity with responsible deployment practices that protect both the business and the people doing the work under pressure.

The Human Layer Remains Critical and Non-Negotiable

Agentic systems act efficiently within the parameters and business rules someone carefully programmed, based on assumptions that may not hold under stress. Accountability needs human faces, documented decision processes showing how conclusions were reached, and visible ownership that stakeholders can engage with directly. When things go wrong in ways affecting customers or regulatory standing, “the tool made a mistake” or “the AI got confused by edge cases” does not restore stakeholder trust or satisfy regulators investigating what happened and who failed to prevent it through proper oversight. Trust rebuilds through visible ownership, fast and honest correction that acknowledges what went wrong without deflection, clear evidence of control and learning from failures, and a genuine commitment, backed by concrete actions, to preventing similar issues. Understanding how CEOs can use AI to improve crisis communications becomes increasingly vital as automation scales and reputational risk compounds across everything the systems touch.

Building Governance-First Automation That Actually Works

HBR’s finding is not a warning against AI adoption or the innovation that drives competitive advantage. It is a warning against naive automation that ignores governance requirements until deployment creates expensive problems, which take months to resolve whilst damaging trust and credibility. AI changes work by increasing output capacity whilst multiplying the oversight needed to keep that output safe, accurate, and aligned with business values and regulatory obligations. If organisations treat governance as an afterthought or a compliance checkbox to tick during implementation, intensified work becomes intensified friction that slows everything down and frustrates teams. People do more across more systems, monitor more dashboards showing metrics they struggle to interpret, coordinate more across departments with conflicting priorities, and still feel perpetually behind schedule and underwater despite working longer hours.

The mature approach is governance-first, automation-supported deployment that respects both AI’s potential and the realistic limitations vendors do not emphasise during sales cycles. Digitise processes with structure and consistency that humans and systems can both understand reliably. Clean data and establish hygiene protocols that stop garbage entering systems in the first place, where it would multiply across processes. Define ownership clearly so everyone knows who is responsible for which outcomes and decisions. Build audit trails capable of surviving regulatory scrutiny and stakeholder questions when trust is tested. Treat shadow AI as a valuable workflow signal revealing where approved tools fail to meet real needs, not a scandal requiring punishment without understanding the root causes driving adoption.

AI genuinely helps organisations operate with better intelligence, improved responsiveness to market changes, and meaningful scale that creates competitive advantages when implemented thoughtfully. The value only shows up when accountability is designed into workflows from the beginning as foundational architecture, not stapled on desperately after deployment damages trust through preventable failures. Because in 2026, competitive differentiation is not how much your systems produce or how fast you automate workflows compared to competitors. Real differentiation is whether your organisation can explain what systems did, why they made specific decisions, and who is accountable for outcomes, whilst still sounding credible, trustworthy, and competent when it matters most to the people whose confidence you need to maintain.


Internal Articles

- How Brands Build Human Trust in the Age of Agentic AI, Starting in 2026

- Vibe Coding Is Rewriting Digital Services: What Agencies, SaaS, and Marketers Must Do Next

- The AI Productivity Paradox in 2025

- Digital Trust in 2025: Governance and Security Shaping the Next Economy

- Data Quality is the Power Move Behind Every Winning AI Strategy in 2025

- Agentic AI in 2025: Ripples that Signal the 2026 Workflow Tsunami

- Beyond the Prompt: What Generative AI Really Means for Your Business, Brand and Future

- The B2B Reset: Why 2025 Belongs to Strategic Thinkers, Lean Tech, and Transparent AI

- How Can CEOs Use AI and Leadership to Improve Crisis Communications in 2026?

- Why More Than 90% of AI Pilots Fail and How Hyper-Personalisation Wins

Sources Referenced

- Harvard Business Review (February 2026): “AI Doesn’t Reduce Work. It Intensifies It.” (HBR’s 2026 Governance Research)

- Microsoft Work Trend Index (2025): workload pressure, oversight requirements, and AI adoption patterns

- Gartner AI Governance Framework (2025): enterprise governance best practices and risk assessment

- ISO/IEC 42001:2023: international standard for AI management systems

- UK Information Commissioner’s Office (ICO): AI auditing framework and accountability guidance

Visual Content Disclaimer: All images in this post are AI-generated.


#LadyinTechverse #AIGovernance #AgenticAI #DigitalTransformation #WorkflowAutomation #TrustInTech #AIStrategy #DataGovernance #EnterpriseAI
